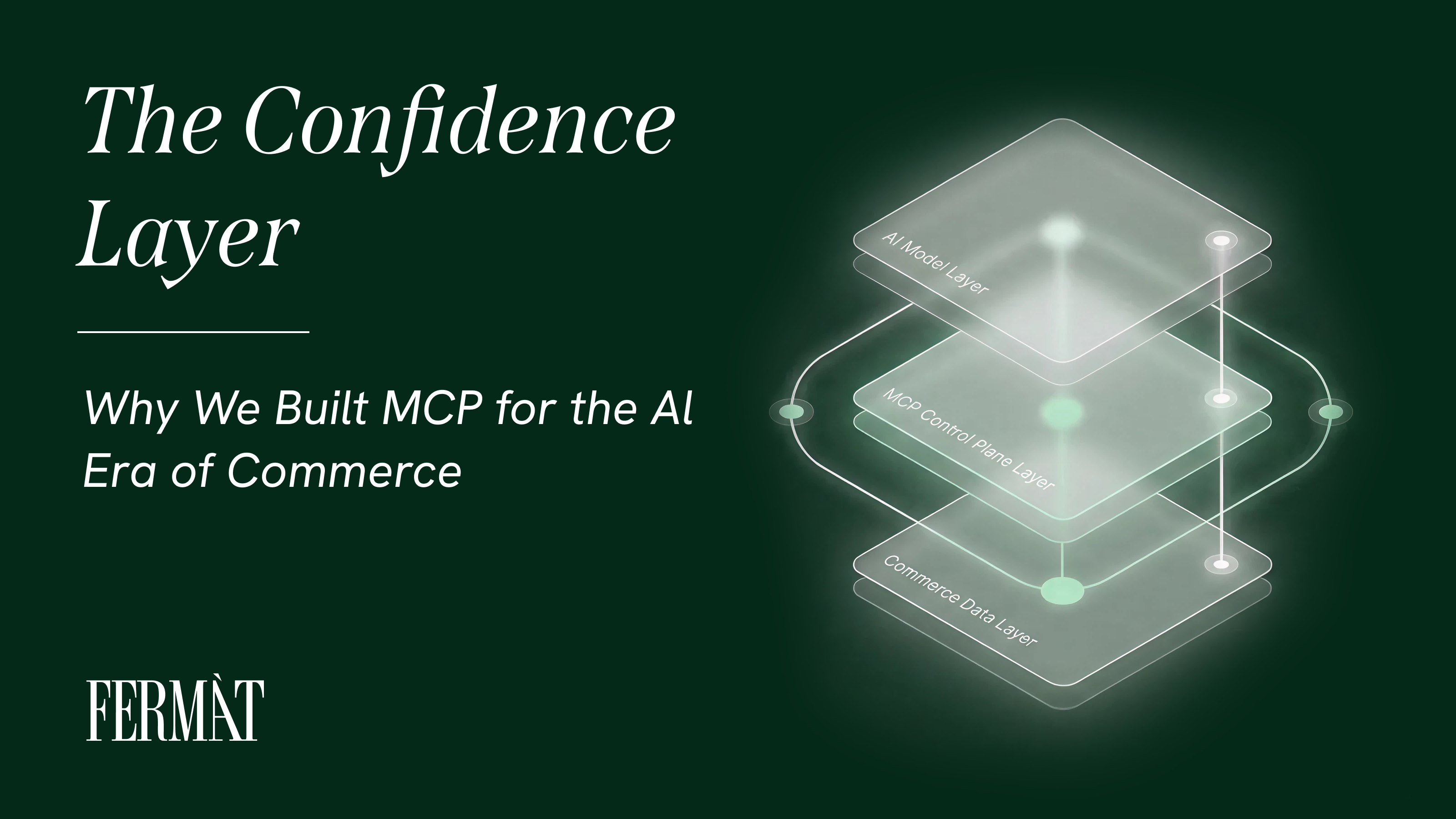

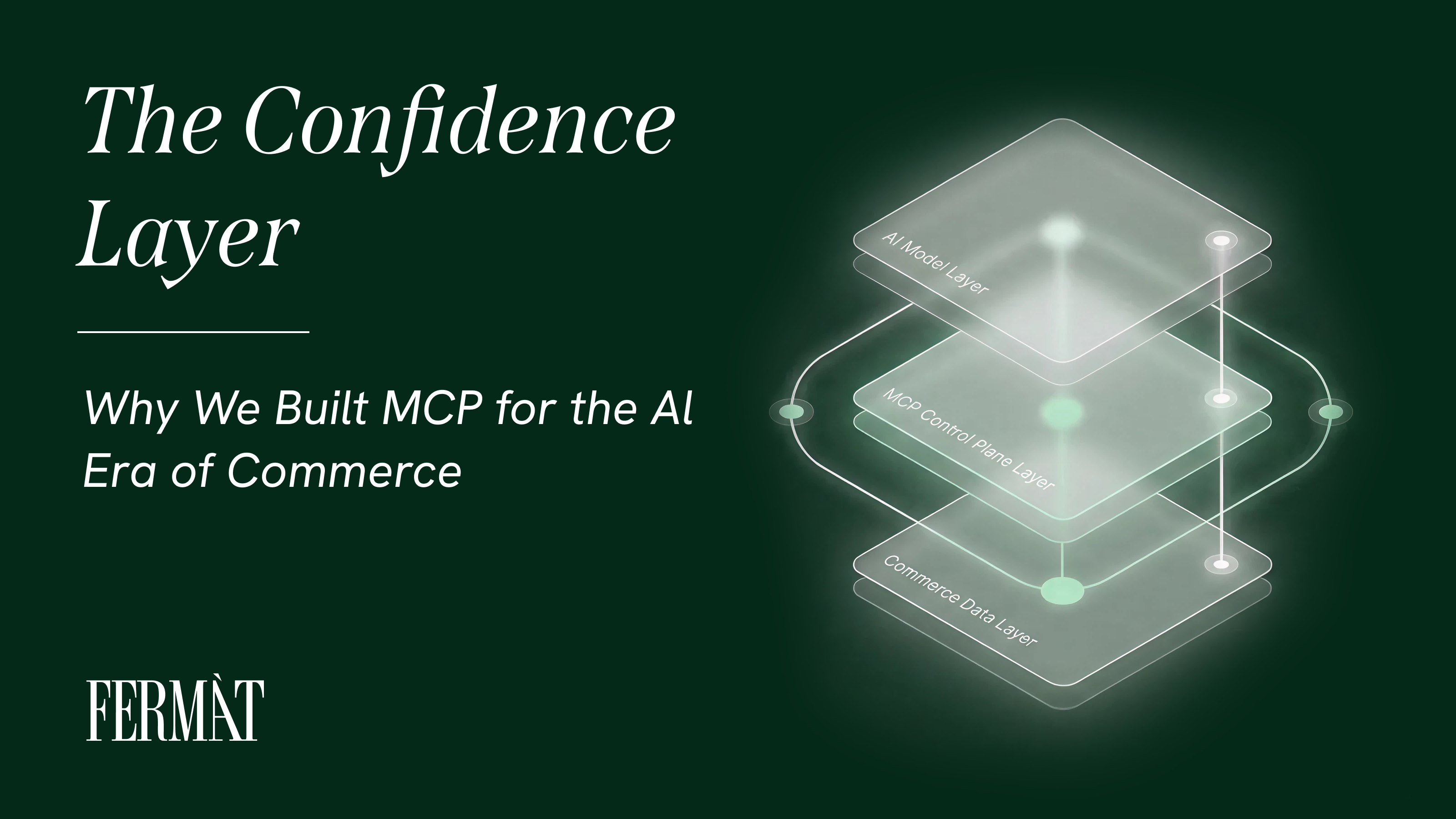

The Confidence Layer: Why We Built MCP for the AI Era of Commerce

AI isn't failing in commerce because it lacks intelligence. It's failing because it lacks structure.

Every enterprise we talk to is running real AI evaluations and looking for ROI; the "experiment" era is over. Gaining AI search visibility, deploying copilots, evaluating models, and proving ROI on agentic workflows are now a must: 78% of organizations use AI in at least one business function, up from 55% in 2023. But beneath the deployment is a deeper anxiety. The question has shifted from "Can AI work?" to "How do we scale this without losing control?"

One way expectations are shifting is the need to pull data from multiple systems into the agentic workflows that a given team or vendor operates. Context is king, and everyone expects their data to flow seamlessly into their AI workflows.

That's why we built our own Model Context Protocol (MCP) layer at FERMÀT.

We built it as a confidence layer, the control plane that sits between AI experimentation and revenue execution. Because the barrier to scaling AI across commerce isn't capability. It's trust.

AI conversations in the boardroom are rarely about model quality. They're about risk. CMOs and CDOs aren't asking, "Can this model generate copy?" They're asking, "We know we have to get the efficiency, but what happens if this goes wrong?"

What happens if we send traffic to an unproven experience? If a model update shifts behavior overnight? If revenue dips and no one knows why?

Most AI tools respond with more dashboards, more metrics, more visibility. But dashboards only quantify anxiety; they don't resolve it. What operators actually need is controlled exposure: the ability to test new AI-driven experiences without putting the entire business at risk. That's a gap that 78% of enterprises say they're struggling to close when integrating AI with existing systems.

CMO confidence in generative AI has reached 83% in 2025. The appetite is clearly there. But appetite without a safe way to act on it creates a dangerous pattern: ambitious pilots that stall at 5% traffic because no one trusts the system enough to scale them further.

We designed MCP to sit on top of your commerce data and FERMÀT's generative experience platform, coordinating low-risk, high-learning cycles. Instead of betting the business on a full rollout, brands can take a measured approach: spin up a new AI-driven search or contextual commerce variant. Once live, these experiences can be monitored from a client connected through MCP. You can ask contextual questions about the outcome and map it to all of your other business priorities, rather than being limited to the measurements available inside a single tool.

This shifts the operating model from "I check everything manually" to "I check when something actually changed based on full business context." That's the difference between reactive monitoring and structured confidence.

Consider what this means in practice. A brand testing agentic checkout experiences can validate performance against its existing merchant product feeds before committing to a broader rollout. If the AI model powering that experience gets updated, MCP catches the behavioral shift before it reaches 100% of traffic. If conversion rates hold and no other issues surface, the brand scales with data behind the decision, not a gut feeling. Given that AI-powered product recommendations already drive a 10–15% lift in conversion rates, the upside of getting this right is significant. But so is the downside of getting it wrong without a safety net.
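
To make the idea of controlled exposure concrete, here is a minimal Python sketch of a staged-rollout gate. It is an illustration under stated assumptions, not FERMÀT's implementation: the names `VariantStats` and `next_traffic_share`, the thresholds, and the doubling step are all hypothetical. The gate holds exposure when the underlying model has changed or the sample is too small, rolls back when the variant converts clearly worse than control, and otherwise scales up.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Observed traffic for one experience (hypothetical structure)."""
    sessions: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Guard against division by zero before any traffic arrives.
        return self.conversions / self.sessions if self.sessions else 0.0


def next_traffic_share(
    current_share: float,
    control: VariantStats,
    variant: VariantStats,
    model_changed: bool,
    min_sessions: int = 1000,        # assumed minimum sample before acting
    max_relative_drop: float = 0.05,  # assumed tolerated drop vs. control
    step: float = 2.0,                # assumed growth factor per cycle
) -> tuple[float, str]:
    """Return the next traffic share for the variant and the reason."""
    if model_changed:
        # The model behind the experience was updated: re-validate first.
        return current_share, "hold: model changed"
    if variant.sessions < min_sessions:
        return current_share, "hold: not enough data"
    floor = control.conversion_rate * (1 - max_relative_drop)
    if variant.conversion_rate < floor:
        return 0.0, "rollback: conversion below guardrail"
    return min(1.0, current_share * step), "scale"
```

With these assumed defaults, a variant at 5% of traffic that converts at least as well as control would move to 10% on the next cycle, while a model update would freeze it at 5% until fresh data comes in.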

"Operators don't need another isolated AI experiment," says Rishabh Jain, CEO of FERMÀT. "They need to know that a given result actually rolls up to a good business outcome. They need assurance that if a model changes, they don’t have unintended consequences. MCP is our answer to that moment of operational trust."

Every enterprise we speak with is about to make an AI decision they'll live with for the next two to three years. Which model do we standardize on? Which agentic workflows do we embed? Which tools become part of our operating system?

These aren't small decisions. AI is moving from side experiment to core infrastructure. Once embedded into workflows, retraining teams and rewiring systems gets expensive fast. The risk isn't choosing the wrong model today. It's hard-wiring your business logic to a single vendor that may not be the right choice tomorrow.

This fear is well-founded. 33% of enterprise leaders cite vendor lock-in as a top concern, and 23% report their AI tools don't integrate with existing systems at all. Meanwhile, 37% of enterprises now deploy five or more AI models in production, up from 29% in 2024, which only makes switching costs and integration complexity steeper. As one enterprise leader recently put it, "All the prompts have been tuned for one provider...changing models is now a task that can take a lot of engineering time."

MCP speaks your commerce data. It speaks your AI models, whether that's Claude today or something else tomorrow. And it allows you to introduce new workflows, new variants, and new agents without ripping out your stack.

Instead of locking your business into a single AI vendor or a brittle workflow, MCP keeps the execution layer flexible. Your commerce logic stays stable. Your experimentation layer stays adaptable. Your AI layer evolves as the market does. In a landscape where enterprise software vendors are deliberately deepening lock-in through AI bundling strategies, that flexibility becomes a strategic asset, not a nice-to-have.
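
One way to picture this separation is a thin interface between commerce logic and whichever model sits behind it. The Python sketch below is illustrative only: `TextModel`, `StubModelA`, `StubModelB`, and `describe_product` are hypothetical names, and the stub classes stand in for real vendor adapters rather than calling any actual SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """Anything that turns a prompt into text (hypothetical interface)."""

    def complete(self, prompt: str) -> str: ...


class StubModelA:
    """Stand-in for one vendor; a real adapter would call that vendor's SDK."""

    def complete(self, prompt: str) -> str:
        return f"[model-a] {prompt}"


class StubModelB:
    """Stand-in for a second vendor with the same method shape."""

    def complete(self, prompt: str) -> str:
        return f"[model-b] {prompt}"


def describe_product(model: TextModel, name: str, price: float) -> str:
    """Commerce logic: builds the prompt once, independent of the vendor."""
    prompt = f"Write a one-line description for {name} priced at ${price:.2f}"
    return model.complete(prompt)
```

Because `describe_product` depends only on the interface, swapping `StubModelA()` for `StubModelB()` changes the model without touching the commerce logic, which is the kind of flexibility the paragraph above describes.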

The broader MCP ecosystem reflects this demand for interoperability. The Model Context Protocol reached 97 million monthly downloads by the end of 2025, a 970x increase from just 100,000 downloads in November 2024. Major platforms, including Microsoft, OpenAI, Google, AWS, and Cloudflare, have all integrated MCP into their stacks. The market is clearly signaling that the era of monolithic, single-vendor AI is ending. What's replacing it is a composable, protocol-driven approach where brands retain control over how AI connects to their data, their experiences, and their revenue.

That's exactly the architecture we've built at FERMÀT. Our MCP layer doesn't just connect AI models to commerce data. It gives operators the confidence to actually use them, to test, learn, scale, and adapt without the fear that one wrong move will crater their numbers.

In a moment where everyone is rushing to adopt AI for commerce, we believe the most durable competitive advantage is optionality. Not just intelligence. Not just experimentation. Control.

That's the role of MCP, the confidence layer between AI ambition and revenue reality.